Fooling Students into Not Fooling Themselves

Raymond Hall

Four activities designed to engage students in the methods of science by showing how personal experience is not always to be trusted.

Carl Sagan has argued with some success that “pseudoscience is embraced in exact proportion as real science is misunderstood”1. In the US today, a significant percentage of our fellow citizens hold beliefs that are unsupported or even refuted by current evidence. A 2001 Gallup poll2 indicated that a third of Americans profess a belief in astrology (up from 27% in 1990), that 28% believe it is possible to converse with the dead (up from 18%), and that fully half believe in extrasensory perception. As a science educator, I feel that enabling students to understand science involves an emphasis on how to distinguish scientific claims from the many pseudoscientific claims that abound in our media.

What's behind the popularity of these claims? Purported reasons why astrology, séances, and ESP are so widely believed are covered in a number of recent books3, and seem as numerous as the unsupported beliefs themselves. In my research and reading of these books I have come to implicate a common factor among those who hold to such claims: the inability to distinguish reliable evidence.

I teach a general education course in critical thinking entitled Science & Nonsense. The course involves a study of the nature of the scientific enterprise, and of how science, and the knowledge obtained from science, affects our lives and shapes our understanding of the world. I also seek to develop students' critical thinking skills through the study of past and current controversial topics that involve science or claim to be supported by science. The aim of the curriculum, then, is to enable students to tell the difference between reliable evidence and hearsay, reason and delusion, science and nonsense!

Science as a Safeguard

What follows is a set of classroom activities and topics designed to actively demonstrate to students how much of what they commonly take as evidence is unmistakably unreliable. The ability to distinguish reliable evidence first involves a better understanding of ourselves, and of the many ways we are prone to misinterpret our perceptions. With these activities I try to convince students that, in the words of Richard Feynman4, “A first principle [in science] is that you must not fool yourself—and you are the easiest person to fool!”

Of course, it is not a simple matter to get people to question what they think they know. I have found that students of critical thinking are initially uncomfortable with the use of reason, since most are defensive of their current beliefs, and they often express surprise at being asked to support their beliefs rationally. One aspect of science that I stress at the outset is that scientists employ methods developed to mitigate what I will call pitfalls of perception, the ways in which our common sense intuition fails us.

Many of these pitfalls are documented in the books and articles of Thomas Gilovich5, a professor of psychology at Cornell University. Gilovich suggests that the amazing complexity of our cognition, of how the human mind takes in and processes information, makes it inevitable that there will be ways in which the system can subtly betray us. States Gilovich: “At one level, [that common sense is so fraught with pitfalls] should not come as a surprise: It is precisely because everyday judgment cannot be trusted that the inferential safeguards known as the scientific method were developed.”6 These safeguards include control samples, blind (and double-blind) studies, and peer review. I have found that exploring these pitfalls of perception is an effective way of engaging students to critically examine their beliefs.
The following four activities show why safeguards are needed and employed, and underscore how wrong things can go if one does not adequately guard against such pitfalls.

Subjective Validation and Astrology

Most people realize that it is easy to read more into a written passage than the writer intended, but feel that this doesn’t present much of a problem. I demonstrate that it can cause alarming misinterpretations with the following activity, most recently popularized by James Randi7, the famous magician and activist against pseudoscientific thinking. Checking one’s horoscope appears to be a strong American pastime, with almost every major newspaper carrying the daily celestial assessments of sun sign astrology. A major claim of sun sign astrology is that one’s personality is largely determined by the position of the sun against the ecliptic constellations at one’s time of birth. Our first activity explores the most prevalent evidence for this claim: that it works!

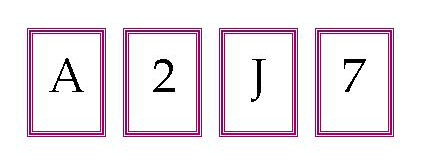

The psychologist Bertram Forer was the first to describe this phenomenon, which he labeled subjective validation, and to use it in his class to demonstrate this inherent bias in the assessment of claims about one's self8. We seem always to notice and count the hits, and for the most part to ignore the misses. Subjective validation, and the wrong impression it creates, plays an important role in why people have such firm convictions about astrology, as well as about the fantastic claims of palm reading, graphology, self-help ideologies, personality inventories, and most paranormal means of personality revelation. The pitfall of subjective validation seems to be inherent in how our mind works, and is just one of many known ways our brains can systematically mislead us. The next pitfall we will look at is how we are fundamentally handicapped when assessing probabilities and degrees of randomness.

Misinterpretation of Random Events and Phone ESP

You happened to be thinking about your mother; suddenly the phone rings. It’s your mother! Amazing! Come to think of it, this has happened to me in the past. There must be some kind of uncanny ESP connection involved.

Research in psychology has demonstrated that the mind has difficulty correctly interpreting random patterns in time. We seek out unusual happenstances and mark them. The times when we think of our mom and she doesn’t call, and the reverse, when she calls and she didn’t happen to be on our mind at the moment, are by contrast non-events and don’t make the same impression on our memories. So after a while the false impression of an ESP connection is created out of what is an inevitable overlap of common events.

Equally troubling is our inability to comprehend short-range statistics. A widespread belief among basketball players, professional and blacktop, is that of the hot hand5.
Michael Jordan and others have spoken of what they feel is a fact: once they have made a shot, they are more likely to make the next; their hand is hot. Conversely, the belief goes, if they miss a shot their hand has gone cold and they are more likely to miss the next. The more shots made (or missed) in a row, the hotter (or colder) the hand. This, to many a ballplayer’s mind, explains why shots sometimes occur in streaks.

The question here is what a random sequence looks like. Consider the following flips of a coin (an independent process):

XOXXOXOOXOOXXOXOXXOX

and

XOXXXOXOXXXXXXOXXXOX

Both series are of course equally probable, but most people would say that the second is too orderly, with too many heads ('X's) in a row, and therefore less likely. This is in contradiction to the math! In 20 tosses one would expect to get six heads in a row somewhere in the sequence about 10% of the time and five in a row about 25% of the time, with a roughly 50-50 chance of at least four in a row. The next activity demonstrates this "streaky" nature of random sequences. Note that during a basketball game each player attempts around 20 shots.
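
The streak figures quoted above can be checked with a short exact calculation. The following is a minimal sketch, not part of the original activities: it assumes a fair coin and counts runs of heads only, and the function name `prob_run_at_least` is a hypothetical helper introduced here for illustration.

```python
from fractions import Fraction


def prob_run_at_least(n_tosses: int, run_length: int) -> Fraction:
    """Exact chance that n fair tosses contain >= run_length heads in a row.

    Assumes a fair coin and counts runs of heads only (run_length >= 1).
    """
    # no_run[i] = number of length-i sequences with NO run of run_length heads
    no_run = [0] * (n_tosses + 1)
    for i in range(n_tosses + 1):
        if i < run_length:
            # Too short to hold a full run, so every sequence qualifies.
            no_run[i] = 2 ** i
        else:
            # The first tail must fall at position j (1..run_length);
            # the remaining i - j tosses must again avoid a full run.
            no_run[i] = sum(no_run[i - j] for j in range(1, run_length + 1))
    return 1 - Fraction(no_run[n_tosses], 2 ** n_tosses)


if __name__ == "__main__":
    for k in (4, 5, 6):
        print(k, round(float(prob_run_at_least(20, k)), 3))
```

Under these assumptions, 20 tosses give roughly 0.48, 0.25, and 0.12 for runs of at least four, five, and six heads, close to the rounded figures in the text. Counting runs of either heads or tails would make streaks more likely still.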

Tom Gilovich and Amos Tversky demonstrate that people have faulty intuition about what random sequences look like. In fact, they have researched the hot hand using data from the Philadelphia 76ers basketball team. Gilovich and Tversky found that there is no meaningful statistical correlation between successive shots, and that a player's shooting percentage is the same after a made basket as after a missed shot9. The analysis rests on fairly straightforward statistical arguments and is ideal for class presentation in conjunction with the above activity.
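
For class presentation, the kind of comparison described above (shooting percentage after a hit versus after a miss) can be sketched on simulated data. This is an illustrative sketch only, not the published analysis: the shooter is assumed to hit each shot independently with probability 0.5, the 100,000-shot sample and fixed seed are arbitrary choices, and `conditional_hit_rates` is a hypothetical helper name.

```python
import random


def conditional_hit_rates(shots):
    """Return (hit rate after a hit, hit rate after a miss).

    `shots` is a sequence of booleans, True for a made shot.
    Assumes at least one hit and one miss occur before the final shot.
    """
    pairs = list(zip(shots, shots[1:]))  # (previous shot, current shot)
    after_hit = [cur for prev, cur in pairs if prev]
    after_miss = [cur for prev, cur in pairs if not prev]
    return sum(after_hit) / len(after_hit), sum(after_miss) / len(after_miss)


# Simulated independent shooter (an assumption, not real game data).
rng = random.Random(0)  # fixed seed so the run is repeatable
shots = [rng.random() < 0.5 for _ in range(100_000)]
rate_after_hit, rate_after_miss = conditional_hit_rates(shots)

if __name__ == "__main__":
    print(f"after a hit:  {rate_after_hit:.3f}")
    print(f"after a miss: {rate_after_miss:.3f}")
```

For an independent shooter the two rates should come out nearly equal, even though the simulated sequence still contains long streaks. Students can apply the same function to real shot records to see whether a "hot hand" shows up.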

Figure 1. Optical illusion as an analogy to the cognitive clustering illusion. Even once one verifies that the brim of the hat is equal to the height, the eye continues to perceive the hat as taller than wide.

In general, most people imagine that a random sequence alternates back and forth much more than the math demonstrates; runs of six in a row seem to most to be beyond chance. The propensity to assign a causal connection to such random sequences is called the clustering illusion, another pitfall of perception related to the misinterpretation of random events. Gilovich argues that this bogus intuition is a cognitive illusion much like the optical illusion of the hat in Figure 1, in that even once one verifies that the brim of the hat is equal to the height, the eye continues to perceive the hat as taller than wide. So here are yet further instances of the mind's tendency to misinterpret. But wait, it gets worse!

Expectation Bias and Seeing Things

Here is a short account of a famous instance of expectation bias, or just plain jumping to conclusions. In 1903, during a time of major discoveries of many new forms of radiation, Professor René Blondlot of the University of Nancy reported the discovery of a remarkable new radiation he labeled N-rays10. He claimed these rays were emitted by all things except green wood and some treated metals, and had penetrating properties akin to those of X-rays. A number of other French scientists corroborated his findings by duplicating his experiments. In one experimental arrangement, the N-rays were said to refract through a metal prism so that a spectrum of dark and light N-ray bands could be cast. Instead of an eyepiece, the spectrometer had a vertical thread treated with luminous paint. Blondlot detected the N-ray bands by judging by eye the faint glow of the thread as an assistant called out angles and rotated the prism through a set of intervals.
The journal Nature sent American physicist Robert W. Wood to investigate the amazing claims of the N-ray experiments. Wood was invited into Blondlot’s lab for a demonstration, and while waiting in the dark for Blondlot’s eyes to adjust, Wood quietly removed the metal prism from the apparatus. Although the prism was in Wood’s pocket, thus completely disabling the apparatus, Professor Blondlot nevertheless called out the presence and absence of N-rays exactly where he had reported and expected them to be11. The detection mechanism of Blondlot’s experiment had an unfortunately large subjective aspect: visually distinguishing a very feeble illumination, literally on the threshold of detection. Could Blondlot’s strong expectation of seeing the thread glow really have shaped his perception, so that he saw a glow when none was present? Many have come to this conclusion.

In the case of Blondlot, perhaps the expectation came from his considerable investment in his own hypothesis, or was reinforced by his lab assistants not wanting to contradict their esteemed professor. Whatever the case, the lesson for the students is that his experimental procedure screamed out for the application of a blind test. If Blondlot had asked his assistant to do in a controlled fashion what Wood had imposed on him, N-rays might never have seen the printed page.

There are other instructive and entertaining incidents in the annals of physics; one that I highly recommend is the story of Martin Fleischmann and Stanley Pons' announcement of cold fusion in 1989. The account as told in Robert Park's book Voodoo Science13 gets to the very heart of the problem: a signal on the threshold of detection above the noise, subversion of peer review, lack of control samples (what is the result if you do not use heavy water in your vessel?), and of course a wide berth for expectation bias. The human pitfall of expectation bias is sometimes referred to as wishful thinking, and plays a role in the acceptance of many questionable beliefs including N-rays, cold fusion, ancient astronauts, claims of perpetual motion ("over unity") devices, and many alternative healing claims, to name a few.

Confirmation Bias and Overlooking Opportunities

Related to expectation bias is another human pitfall, that of confirmation bias. Inductive reasoning has preeminence in science, and early on I explain how disconfirming evidence is more powerful than confirming evidence in deciding among competing hypotheses. Yet it seems almost unnatural for us to seek to disconfirm, as illustrated in the next activity.

The most telling aspect of this activity is that it shows that even when we don’t have a vested interest in the validity of the statement (no wish or need for the statement to be true), we still seek the confirmatory evidence, however much more powerful disconfirmation might be. This pitfall of perception is well documented in the psychology literature14.

Once students have been made aware of these pitfalls, I have them research a number of popular topics with extraordinary claims: ancient astronauts, the Loch Ness monster, abductions by extraterrestrial visitors, palmistry, psychic detectives, bigfoot, spontaneous human combustion, out-of-body experiences, and so on; the list is long! My students learn to recognize the potential role of these pitfalls in the evidence presented by the proponents of these claims, and I have found that these activities leave a strong and lasting impression. You, yourself, are the easiest person to fool... Once my students are convinced of this, I feel there is hope that many will graduate with the ability to discern the difference between astrology and astronomy.

Notes and References:

1. Carl Sagan, The Demon-Haunted World: Science as a Candle in the Dark, Ballantine Books, New York, 1997.
2. Frank Newport and Maura Strausberg, "Americans' Belief in Psychic and Paranormal Phenomena is up Over Last Decade", Gallup News Service, June 8, 2001, http://www.gallup.com/poll/releases/pr010608.asp
3. Theodore Schick and Lewis Vaughn, How to Think About Weird Things: Critical Thinking for a New Age, Mayfield Publishing Co., California, 1995; also Michael Shermer, Why People Believe Weird Things: Pseudoscience, Superstition, and Other Confusions of Our Time, W. H. Freeman & Co., 1998; and Nicholas Humphrey, Leaps of Faith: Science, Miracles, and the Search for Supernatural Consolation, Copernicus Books, 1999.
4. Richard Feynman, Edward Hutchings (Ed.), "Cargo Cult Science", in Surely You're Joking, Mr. Feynman!: Adventures of a Curious Character, W. W. Norton & Company, 1997.
5. Thomas Gilovich, "Some Systematic Biases of Everyday Judgment", Skeptical Inquirer, pg. 231, March/April 1997.
6. Thomas Gilovich, How We Know What Isn't So: The Fallibility of Human Reason in Everyday Life, The Free Press, New York, 1991.
7. James Randi, Secrets of the Psychics, NOVA, PBS video, 1993.
8. B. R. Forer, "The Fallacy of Personal Validation: A Classroom Demonstration of Gullibility", Journal of Abnormal Psychology, 44, (1949) 118-121.
9. Amos Tversky and Thomas Gilovich, "The Cold Facts About the Hot Hand", Chance, Vol 22, No. 2 (1980) 18-21.
10. Mary Jo Nye, "N-rays: An episode in the history and psychology of science", Historical Studies in the Physical Sciences 11 (1), (1980) 127-156; and Irving Langmuir and Robert N. Hall, "Pathological science", Physics Today 42 (10), (1989) 36-48.
11. Robert W. Wood, "The N-rays", Nature 70, (1904) 530-531.
12. Terrance Shaw, "Spider Sniffing, Oobleck, Candles and Other Skeptic Creating Activities", Proceedings of the 1998 California Science Teachers Association Annual Meeting (unpublished).
13. Robert L. Park, Voodoo Science: The Road from Foolishness to Fraud, Oxford Univ. Press, 1999.
14. P. C. Wason, "Reasoning", in B. M. Foss (Ed.), New Horizons in Psychology, Harmondsworth: Penguin.